Daily AI Digest — 2026-05-08

arXiv Highlights

Lightning Unified Video Editing via In-Context Sparse Attention

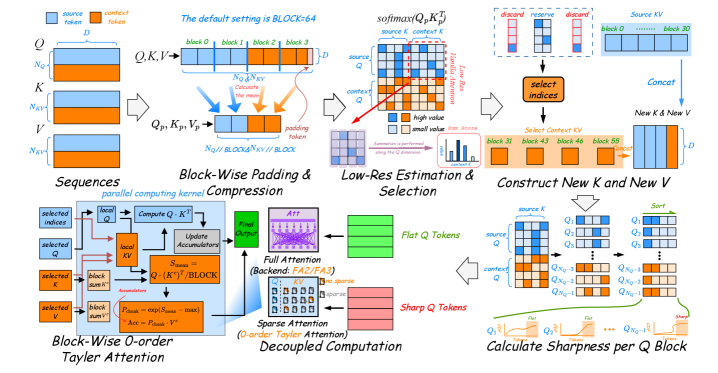

In-context learning (ICL) has become a unifying framework for video editing: the source video and a “context” (e.g., an edited reference frame, instruction-conditioned exemplar) are concatenated and fed jointly through a video diffusion transformer. The price is quadratic attention over a doubled token budget. This paper introduces In-context Sparse Attention (ISA), a structured sparsification scheme that exploits the asymmetry between source and context tokens, and packages it into LIVEditor, a Wan 2.2 post-trained video editor.

Two observations driving the design

The authors decompose the ICL attention matrix into four blocks: Q^{\text{src}}(K^{\text{src}})^\top, Q^{\text{src}}(K^{\text{ctx}})^\top, Q^{\text{ctx}}(K^{\text{src}})^\top, Q^{\text{ctx}}(K^{\text{ctx}})^\top.

The first observation is empirical: the source–source block dominates, and the gap to the source–context block grows with depth. This motivates aggressive pruning of context Keys/Values rather than uniform sparsification. The second observation is theoretical: the error of a 0-th order Taylor expansion of softmax attention correlates with the sharpness of the Query distribution. Sharp queries (peaked attention) tolerate the approximation poorly; flat queries can be approximated cheaply.
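As a rough illustration of the four-block view, the NumPy sketch below measures how much softmax attention mass each query group places on source versus context keys. The function name `block_attention_mass`, the single-head layout, and the random inputs are assumptions made for illustration; this is not the authors' measurement code.

```python
import numpy as np

def block_attention_mass(q, k, n_src):
    """Average attention mass per (query group -> key group) block.

    q, k: arrays of shape (n_src + n_ctx, d); the first n_src rows are source tokens.
    """
    scores = q @ k.T / np.sqrt(q.shape[-1])
    scores = scores - scores.max(axis=-1, keepdims=True)   # numerical stability
    attn = np.exp(scores)
    attn = attn / attn.sum(axis=-1, keepdims=True)         # each row sums to 1
    groups = {"src": slice(0, n_src), "ctx": slice(n_src, None)}
    mass = {}
    for q_name, q_sl in groups.items():
        for k_name, k_sl in groups.items():
            # mass landing on the key group, averaged over the queries in the group
            mass[f"{q_name}->{k_name}"] = float(attn[q_sl, k_sl].sum(axis=-1).mean())
    return mass

rng = np.random.default_rng(0)
q = rng.normal(size=(12, 8))
k = rng.normal(size=(12, 8))
mass = block_attention_mass(q, k, n_src=6)
```

With real activations, the first observation corresponds to `mass["src->src"]` being well above `mass["src->ctx"]`, with the gap widening in deeper layers.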

Method

ISA operates on block-partitioned tokens of block size L_Q, L_K, with compressed representations Q^c, K^c, V^c obtained by sequence-axis pooling. The pipeline has three stages.

1. Context pre-selection. From the coarse score matrix S_{\text{coarse}}\in\mathbb{R}^{B\times H\times N_Q\times N_K}, the slice corresponding to source-query × context-key entries, S^{\text{ctx}}_{\text{coarse}}, is averaged over the source-query axis and Top-k selected:

I_{\text{topk}} = \text{TopK}(\text{Mean}(S^{\text{ctx}}_{\text{coarse}}, \text{axis}=2), \text{axis}=2).

The retained context KV blocks are gathered and concatenated with the full source KV:

K_{\text{new}} = [K^{\text{src}}; \text{Gather}(K^{\text{ctx}}, I_{\text{topk}})], \quad V_{\text{new}} = [V^{\text{src}}; \text{Gather}(V^{\text{ctx}}, I_{\text{topk}})].

A hyperparameter \alpha_s (default 0.125) controls the fraction of context blocks retained — i.e., 87.5% of context KV is dropped before any attention is computed.

2. Block-wise 0-th order Taylor sparse attention. For low-sharpness query blocks, ISA replaces softmax attention with a 0-th order Taylor approximation around a block-mean reference, which reduces to a cheap linear aggregation that the authors implement as a dedicated sparse kernel. The approximation error is bounded by the Query-block sharpness; the proof is in the appendix but the take-away is that flat queries are essentially free.

3. Dynamic query grouping. Per query block, sharpness is computed from the coarse scores; high-sharpness blocks (fraction \alpha_f=0.5) are routed to full FlashAttention-2, while the remainder go to the 0-th order Taylor kernel. A second ratio \alpha_{ns}=0.0625 further controls KV sparsity for the non-sharp group. This produces two execution paths whose total cost is dominated by the sparse kernel, with negligible overhead from selection and gather operations.
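The three stages can be sketched for a single head in NumPy. This is a minimal illustration under stated assumptions, not the authors' kernel: the 0-th order approximation is read here as "every query in a block reuses the output of the block-mean query", the sharpness proxy (maximum coarse softmax probability) is hypothetical, and the extra KV sparsity controlled by \alpha_{ns} is omitted.

```python
import numpy as np

def softmax(x):
    x = x - x.max(axis=-1, keepdims=True)            # stabilize before exponentiating
    e = np.exp(x)
    return e / e.sum(axis=-1, keepdims=True)

def preselect_context(s_ctx_coarse, alpha_s=0.125):
    """Stage 1: pick context key blocks. s_ctx_coarse has shape (Nq_src, Nk_ctx)."""
    keep = max(1, int(round(alpha_s * s_ctx_coarse.shape[1])))
    mean_score = s_ctx_coarse.mean(axis=0)           # average over source-query blocks
    return np.sort(np.argsort(-mean_score)[:keep])   # Top-k, returned in original order

def gather_kv(x_src, x_ctx_blocks, idx):
    """Concatenate all source tokens with the tokens of the retained context blocks."""
    kept = x_ctx_blocks[idx].reshape(-1, x_ctx_blocks.shape[-1])
    return np.concatenate([x_src, kept], axis=0)

def full_attention(q_block, k, v):
    return softmax(q_block @ k.T / np.sqrt(k.shape[-1])) @ v

def zeroth_order_attention(q_block, k, v):
    """Stage 2 (assumed reading): all queries in a block share the block-mean output."""
    q_bar = q_block.mean(axis=0, keepdims=True)
    return np.repeat(full_attention(q_bar, k, v), len(q_block), axis=0)

def route_query_blocks(s_coarse, alpha_f=0.5):
    """Stage 3: the sharpest alpha_f fraction of query blocks goes to full attention."""
    sharpness = softmax(s_coarse).max(axis=-1)       # hypothetical sharpness proxy
    n_sharp = int(round(alpha_f * len(sharpness)))
    order = np.argsort(-sharpness, kind="stable")
    return np.sort(order[:n_sharp]), np.sort(order[n_sharp:])

rng = np.random.default_rng(0)
d, block_len = 8, 4
k = rng.normal(size=(32, d))
v = rng.normal(size=(32, d))
flat_block = 0.1 * rng.normal(size=(block_len, d))     # near-uniform attention
sharp_block = 10.0 * rng.normal(size=(block_len, d))   # peaked attention
err_flat = np.abs(zeroth_order_attention(flat_block, k, v)
                  - full_attention(flat_block, k, v)).mean()
err_sharp = np.abs(zeroth_order_attention(sharp_block, k, v)
                   - full_attention(sharp_block, k, v)).mean()
```

In this toy setup the approximation error for the flat block is far smaller than for the sharp block, which is the qualitative behavior the routing step relies on.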

LIVEditor and data

LIVEditor is built by post-training the high-noise branch of Wan 2.2 on a curated 1.7M-sample dataset. The data pipeline uses Gemini 2.5 Flash for caption and instruction synthesis and Gemini 2.5 Image Preview to render edited initial frames, then propagates edits temporally via pose-guided TI2V for humans and attention injection for non-human subjects. Public sources (Ditto, LoVoRA, ReCo) supplement the non-human portion. Training uses two stages: 1.7M samples at lr 1e-5, then 0.089M high-quality samples at lr 1e-6, both with batch 16 under ZeRO-3 Offload. A consistent role assignment (synthetic frames as context, real frames as source) mitigates artifact leakage from synthetic data.

Results

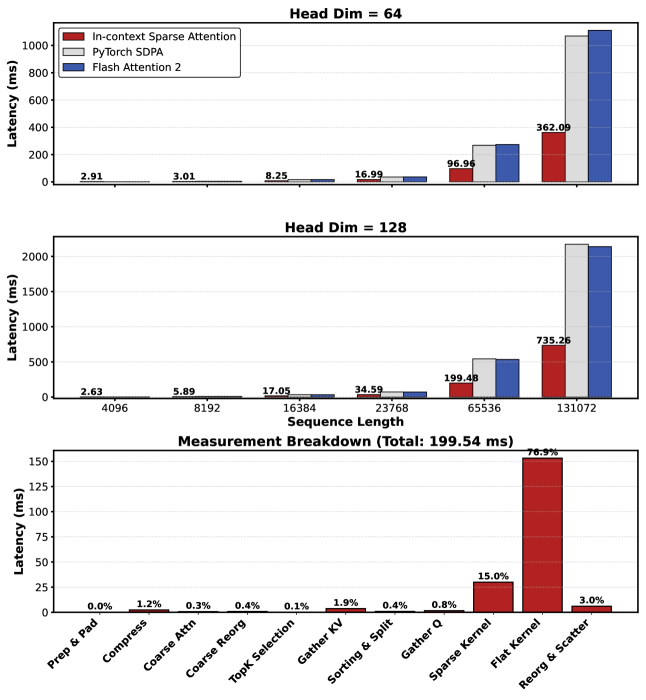

LIVEditor surpasses prior state-of-the-art on EditVerseBench, IVE-Bench, and VIE-Bench. The headline efficiency claim is roughly 60% reduction in attention-module latency relative to dense attention, with Figure 2 showing the speedup over both SDPA and FA2 widening monotonically with sequence length — the regime that matters for ICL editing where token counts double. Ablations on EditVerseBench and FiVE-Bench show ISA matches or exceeds full attention quality at the chosen (\alpha_s, \alpha_{ns}, \alpha_f) = (0.125, 0.0625, 0.5), contradicting the usual quality–sparsity tradeoff and supporting the authors’ claim that context tokens are largely redundant.

Limitations and open questions

The pre-selection assumes context tokens are systematically lower-saliency than source tokens; this is validated for ICL editing but unlikely to hold for tasks where the context carries fine-grained spatial information that source frames lack (e.g., long-range identity reference, multi-shot consistency). The sharpness-based router uses fixed ratios rather than thresholds, so adaptation to varying scene complexity is coarse. The 0-th order Taylor approximation error bound is sharpness-dependent but not data-distribution-dependent — empirical equivalence to full attention may degrade outside the training regime. Finally, gains are reported on attention-module latency; end-to-end diffusion sampling latency reductions depend on how attention dominates the schedule at the chosen resolution.
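The last caveat is Amdahl's law in disguise. The short calculation below, with a hypothetical helper `end_to_end_speedup` and made-up attention shares, shows how a 60% cut in attention latency translates into end-to-end speedup depending on how much of each sampling step is spent in attention.

```python
def end_to_end_speedup(attention_share, attention_latency_reduction=0.6):
    """Amdahl-style estimate; attention_share is the fraction of step time spent in attention."""
    if not 0.0 <= attention_share <= 1.0:
        raise ValueError("attention_share must lie in [0, 1]")
    remaining_time = 1.0 - attention_share * attention_latency_reduction
    return 1.0 / remaining_time

for share in (0.3, 0.5, 0.8):   # illustrative shares, not measured values
    print(f"attention share {share:.0%}: {end_to_end_speedup(share):.2f}x end-to-end")
```

A 60% latency reduction is a 2.5x speedup of the attention module itself, but if attention accounts for half of the step time the end-to-end gain is only about 1.43x.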

Why this matters

ICL is becoming the default interface for controllable video editing, but it doubles attention cost on top of an already expensive video DiT. ISA shows that the source/context asymmetry is exploitable structurally, not just empirically, and that combining KV-side pre-selection with query-side sharpness routing cuts attention-module latency by roughly 60% at near-lossless quality, which is the right shape of optimization for this regime.

Source: https://arxiv.org/abs/2605.04569

Stream-R1: Reliability-Perplexity Aware Reward Distillation for Streaming Video Generation

Problem

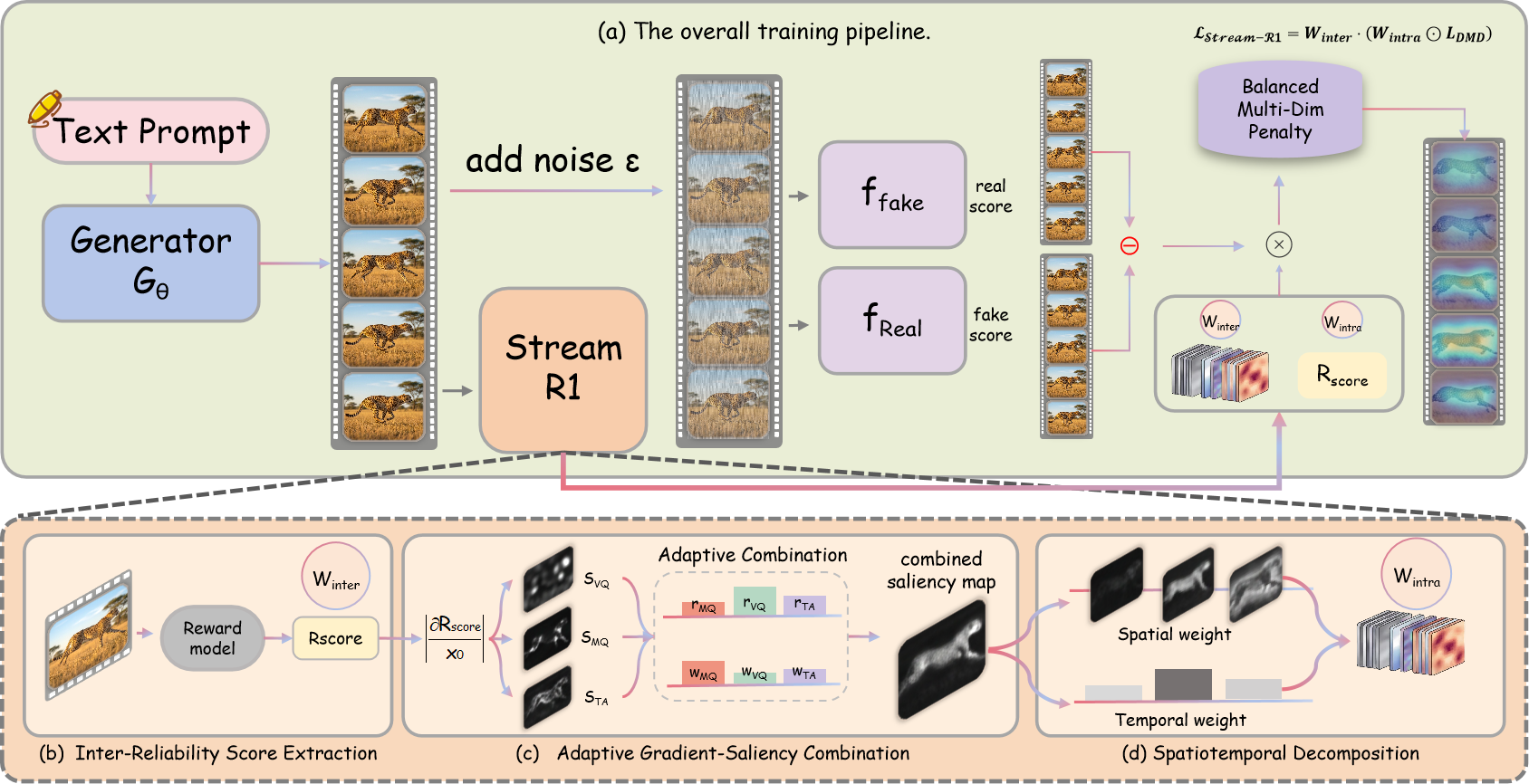

Distribution Matching Distillation (DMD) is the workhorse for compressing autoregressive streaming video diffusion models into few-step students, but the standard objective treats every rollout, every frame, and every pixel as equally informative supervision. The authors argue this uniform weighting conflates two distinct decisions: whether a given student rollout deserves to be learned from at all, and where within that rollout the gradient should concentrate. Both axes carry real variance — some student samples are simply unreliable (the teacher-student score gap is noisy or misleading), and within any reliable sample, perplexity is concentrated in specific regions and frames (motion boundaries, late frames in autoregressive rollouts, etc.). For long-horizon streaming generation where errors compound, ignoring this structure caps the achievable quality of the distilled student.

Method

Stream-R1 is built on top of Reward Forcing with Wan2.1-T2V-1.3B as the student and Wan2.1-T2V-14B as the teacher, generating 5s videos at 832\times 480. The base DMD gradient is the standard score difference

\mathbf{g} = f_{\text{fake}}(\mathbf{x}_t, c) - f_{\text{real}}(\mathbf{x}_t, c),

with loss \mathcal{L}_{\text{DMD}} = \tfrac{1}{2}\|\mathbf{x}_0 - \text{sg}(\mathbf{x}_0 - \hat{\mathbf{g}})\|^2. Stream-R1 reshapes this into

\mathcal{L}_{\text{Stream-R1}} = \mathbf{W}_{\text{inter}} \cdot (\mathbf{W}_{\text{intra}} \odot \mathcal{L}_{\text{DMD}}),

where a single shared reward model drives both weights.

The pipeline has four components:

Inter-Reliability score extraction. A pretrained reward model evaluates the fake rollout along three axes — Visual Quality (VQ), Motion Quality (MQ), and Text Alignment (TA) — yielding a scalar R_{\text{score}} (the “Overall” mode averages the three). The inter-rollout weight is an exponential rescaling w_{\text{inter}} \propto \exp(\beta R_{\text{score}}) with inverse temperature \beta = 2.0. High-reward rollouts dominate the gradient; unreliable ones are downweighted rather than discarded.

Adaptive gradient-saliency combination. For each axis, gradients of the reward w.r.t. the pixel-level latent give a saliency map s_{\text{VQ}}, s_{\text{MQ}}, s_{\text{TA}}. These are fused adaptively (temperature \tau = 1.0) into a unified saliency that highlights spatiotemporal positions where reward sensitivity is highest — i.e., where loss reduction will most improve perceived quality.

Spatiotemporal decomposition. The unified map is factorized into separable spatial and temporal weight vectors with floors \sigma_{\min} = 0.15 and \tau_{\min} = 0.20. The floors prevent any region or frame from being completely zeroed, which would otherwise cause representational drift in regions the reward model happens to ignore. The product yields \mathbf{W}_{\text{intra}}.

Balanced multi-dimensional reward ensures none of VQ/MQ/TA dominates the saliency fusion.
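The two weights can be sketched as follows. This is a minimal NumPy illustration under assumptions that the digest does not pin down: the inter-rollout weights are normalized to mean 1 over the batch, the saliency map is nonnegative, the spatial and temporal factors are obtained by averaging and max-normalizing, and the floors are applied by clamping. All function names are hypothetical.

```python
import numpy as np

def inter_weights(r_scores, beta=2.0):
    """Rollout weights proportional to exp(beta * R), rescaled to mean 1 (assumed normalization)."""
    r = np.asarray(r_scores, dtype=float)
    w = np.exp(beta * (r - r.max()))                 # subtract max for stability
    return w * len(w) / w.sum()

def intra_weights(saliency, sigma_min=0.15, tau_min=0.20, eps=1e-8):
    """Separable weights from one rollout's saliency map of shape (T, H, W)."""
    spatial = saliency.mean(axis=0)                  # (H, W)
    temporal = saliency.mean(axis=(1, 2))            # (T,)
    spatial = np.maximum(spatial / (spatial.max() + eps), sigma_min)     # spatial floor
    temporal = np.maximum(temporal / (temporal.max() + eps), tau_min)    # temporal floor
    return temporal[:, None, None] * spatial[None]   # (T, H, W)

def stream_r1_loss(dmd_loss, r_scores, saliency):
    """dmd_loss and saliency have shape (N, T, H, W); r_scores has shape (N,)."""
    w_inter = inter_weights(r_scores)[:, None, None, None]
    w_intra = np.stack([intra_weights(s) for s in saliency])
    return float((w_inter * w_intra * dmd_loss).mean())
```

Because both floors are applied before the product, no position receives a weight below \sigma_{\min}\tau_{\min} = 0.03 in this sketch.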

Training uses 1,000 optimizer steps on 8×A100 with effective batch 64, AdamW at 2.0\times 10^{-6} for G_\theta and 4.0\times 10^{-7} for f_{\text{fake}}, generator updated every 5 steps, EMA decay 0.99 from step 200. Denoising uses 4 steps at timesteps [1000, 750, 500, 250] with chunked attention window 9 over 3-frame latent chunks. Total wall time ≈56 hours.

Results

The qualitative side-by-side against the Reward Forcing baseline shows the most visible gains on long autoregressive rollouts, where the baseline accumulates motion artifacts and texture decay while Stream-R1 maintains sharper structure and more coherent motion across chunks.

The provided sections do not include a quantitative table, but the configuration is fully specified: \beta = 2.0 for inter-reliability sharpness, \tau = 1.0 for saliency fusion, and the floors (\sigma_{\min}, \tau_{\min}) = (0.15, 0.20) are the key hyperparameters that trade off focused optimization against coverage. That a single reward model drives both weights, rather than two separately learned modulators, is the main ergonomic claim: the same scalar R_{\text{score}} that weights each rollout also produces, via its gradients, the spatiotemporal mask.

Limitations and open questions

The framework inherits the reward model’s biases — if the VQ/MQ/TA model under-rewards certain motion patterns, those regions receive systematically smaller gradients, and the spatial/temporal floors only partially mitigate this. The exponential reweighting with \beta = 2.0 is aggressive; effective batch size after w_{\text{inter}} rescaling can collapse onto a few high-reward rollouts, which is reminiscent of importance-sampling variance issues in RL. The separable spatial × temporal factorization is a strong assumption: it cannot represent saliency that is jointly localized in space and time (e.g., a moving object). The selected sections also do not report VBench or user-study numbers, so the magnitude of improvement over Reward Forcing and CausVid is not quantified here. Finally, the method is demonstrated only at the 1.3B-student / 14B-teacher scale on 5s clips; whether the reliability-perplexity decomposition holds for longer horizons or larger students is open.
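The batch-collapse concern can be quantified with the Kish effective sample size of the exponential weights. The helper below is an illustrative diagnostic, not something the paper is stated to report, and the reward values in the usage are made up.

```python
import numpy as np

def effective_sample_size(r_scores, beta=2.0):
    """Kish effective sample size of weights w_i proportional to exp(beta * R_i)."""
    r = np.asarray(r_scores, dtype=float)
    w = np.exp(beta * (r - r.max()))                 # shift by max for stability
    return float(w.sum() ** 2 / (w ** 2).sum())

uniform = effective_sample_size([1.0, 1.0, 1.0, 1.0])    # equals the batch size
skewed = effective_sample_size([0.0, 0.0, 0.0, 5.0])     # close to 1: one rollout dominates
```

When rewards are equal the effective size equals the batch size; a single rollout with a much higher reward pulls it toward 1, which is the variance problem the paragraph describes.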

Why this matters

Stream-R1 reframes DMD distillation as a two-level credit assignment problem and shows that a single reward model can simultaneously gate which rollouts to trust and where within them to push gradients. This is a clean generalization of reward-guided distillation that should transfer to other generative-distillation regimes where supervision quality varies across samples and within samples.

Source: https://arxiv.org/abs/2605.03849

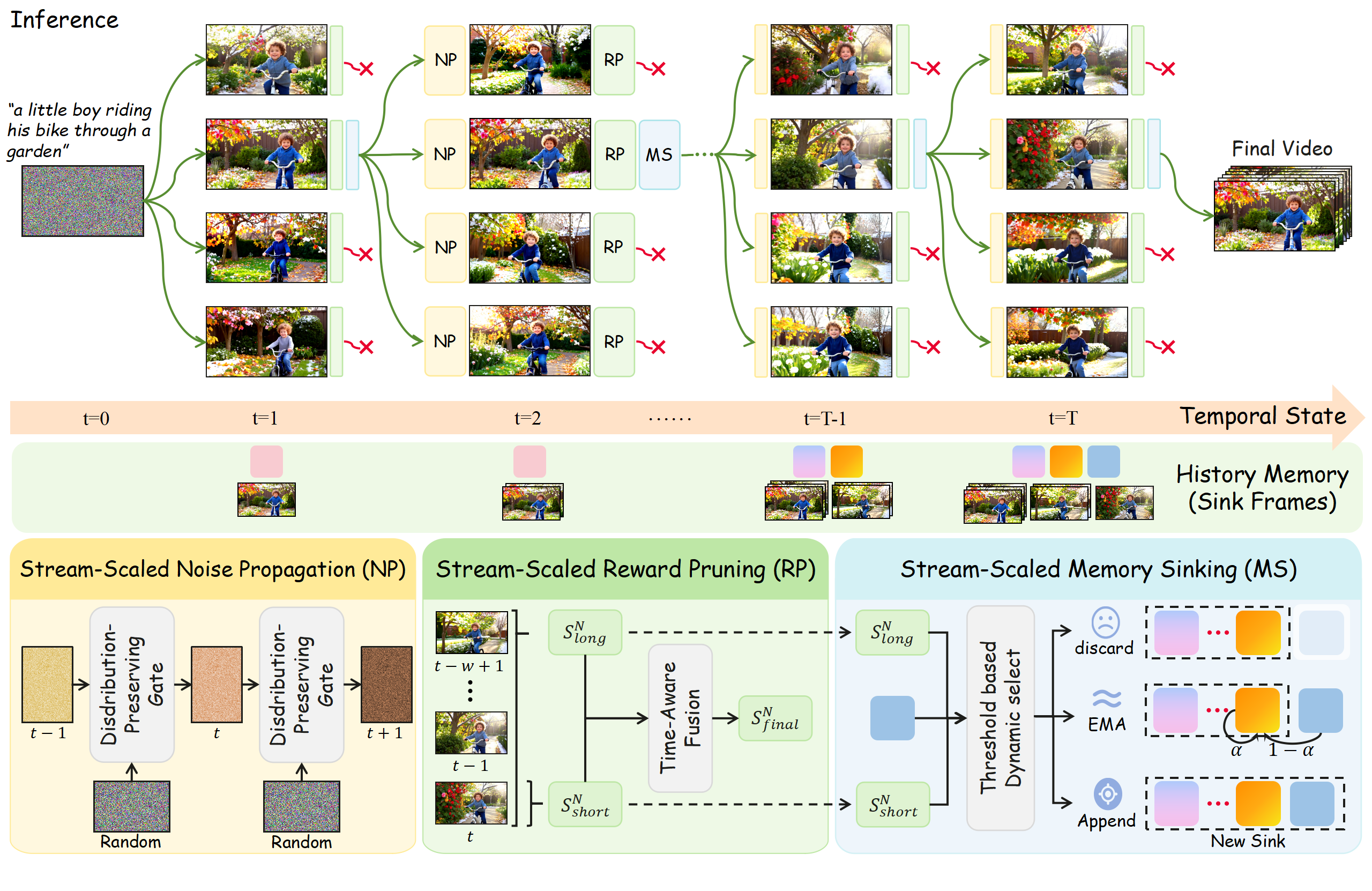

Stream-T1: Test-Time Scaling for Streaming Video Generation

Test-time scaling (TTS) for video generation has so far inherited the diffusion-model template: sample many full-trajectory candidates, score them with a reward model, keep the best. For video this is doubly expensive — each candidate is a full denoising trajectory over many frames — and the reward signal arrives only after the entire video is synthesized, providing no temporal guidance during generation. Stream-T1 reframes TTS around streaming (chunk-autoregressive) video diffusion, where generation is already factorized into short chunks denoised in few steps. This factorization is what makes TTS tractable: search can be performed per chunk rather than per full video, and reward feedback can be injected between chunks.

Setup

The base model is LongLive (built on Wan2.1-T2V-1.3B), which factorizes the joint distribution as

p_\theta(x^{1:N}\mid c) = \prod_{i=1}^{N} p_\theta(x^i \mid x^{<i}, c),

with each conditional realized by a few-step denoising diffusion module G_\theta over a chunk of latents. KV-cache uses an attention window of 9 chunks plus a sink of 3. Stream-T1 wraps this with a beam search over chunks, with three components active at every chunk boundary.

Stream-Scaled Noise Propagation

Standard streaming generation initializes each chunk’s latent noise i.i.d. from \mathcal{N}(0,I), discarding any prior about which noise seeds led to high-reward continuations. Stream-T1’s first stage conditions the noise sampling for chunk n on the noise trajectories of the surviving (high-reward) candidates from chunk n{-}1. The motivation is that under the few-step denoising regime of streaming models, the initial noise is far from a nuisance variable — it materially shapes motion continuity and identity preservation across the chunk boundary. By propagating “historically proven” Gaussian priors instead of resampling i.i.d., the search establishes an explicit temporal dependency in the noise space, biasing exploration toward trajectories that already produced coherent dynamics.
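The digest does not spell out how the noise prior is propagated. One simple way to make a chunk's noise depend on a surviving parent's noise while keeping it marginally standard normal is an AR(1)-style mix; the rule, the function name `propagate_noise`, and the correlation `rho` below are purely hypothetical and are shown only to make the idea concrete.

```python
import numpy as np

def propagate_noise(parent_noise, rho, rng):
    """Correlated resampling (hypothetical rule): stays N(0, I) marginally for |rho| <= 1."""
    fresh = rng.standard_normal(parent_noise.shape)
    return rho * parent_noise + np.sqrt(1.0 - rho ** 2) * fresh

rng = np.random.default_rng(0)
parent = rng.standard_normal(200_000)     # noise of a surviving candidate at chunk n-1
child = propagate_noise(parent, rho=0.8, rng=rng)
```

Setting `rho=0` recovers i.i.d. resampling and `rho=1` reuses the parent noise unchanged, so `rho` trades exploration against continuity in this sketch.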

Stream-Scaled Reward Pruning

After each chunk is fully denoised, candidates are scored by a Long-Short Combined Reward that targets both local aesthetics and global coherence:

- Short-sequence (frame/chunk-level) reward: image reward models such as HPSv3, ImageReward, and MHP evaluate spatial aesthetics and visual fidelity.

- Long-sequence reward: video reward models — VisionReward, VideoAlign, VideoLLaMA3 — applied over a sliding window of 10 chunks to score temporal coherence and global dynamics.

Suboptimal branches are pruned, leaving only the surviving beams whose noise and KV-cache state propagate to the next chunk. The dual-level reward addresses a known failure mode of pure frame-level scoring (locally pretty but temporally inconsistent) while keeping the long-range scorer’s context bounded by the sliding window.
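A toy version of chunk-level beam search with a combined short and long reward is sketched below. The generator and both reward functions are stand-ins (a "chunk" is a single float), and `branch`, `beam_width`, and the equal reward weighting are assumptions; noise and KV-cache propagation are left out for brevity.

```python
import random

def beam_search_chunks(generate_chunk, short_reward, long_reward, n_chunks,
                       beam_width=2, branch=3, window=10, weight_short=0.5, seed=0):
    rng = random.Random(seed)
    beams = [[]]                                   # each beam is the list of chunks so far
    for _step in range(n_chunks):
        candidates = []
        for history in beams:
            for _branch in range(branch):
                video = history + [generate_chunk(history, rng)]
                score = (weight_short * short_reward(video[-1])
                         + (1.0 - weight_short) * long_reward(video[-window:]))
                candidates.append((score, video))
        candidates.sort(key=lambda c: c[0], reverse=True)
        beams = [video for _, video in candidates[:beam_width]]   # prune the rest
    return beams

# Toy stand-ins for the generator and the two reward models.
def toy_generate(history, rng):
    return (history[-1] if history else 0.0) + rng.gauss(0.0, 1.0)

def toy_short(chunk):
    return -abs(chunk)                             # "local quality"

def toy_long(recent):
    return -sum(abs(b - a) for a, b in zip(recent, recent[1:]))   # "temporal smoothness"

beams = beam_search_chunks(toy_generate, toy_short, toy_long, n_chunks=5)
```

The cost structure is visible in the loops: each chunk requires `beam_width * branch` generator calls instead of one.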

Stream-Scaled Memory Sinking

The third stage targets the KV-cache eviction policy. With a window of 9 and a sink of 3, naive FIFO eviction discards context that may carry critical long-horizon semantics (subject identity, scene layout). Stream-T1 routes evicted context into different update pathways based on semantic boundary detection, and the routing decision is modulated by the reward score of the chunk it came from. Concretely, high-reward, semantically anchoring context is preserved in the sink, while transient context is allowed to evict normally. This couples the search signal back into the model’s long-term memory rather than letting reward influence stop at pruning.
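The eviction idea can be pictured with a toy cache that tracks chunk identifiers instead of tensors. Everything here is an assumption made for illustration: the class name, the `is_anchor` flag (standing in for the semantic boundary detector), and the rule that the sink keeps the highest-reward evicted anchors.

```python
from collections import deque

class SinkingKVCache:
    """Sliding window of recent chunks plus a small reward-ranked sink."""

    def __init__(self, window=9, sink=3):
        self.window = deque()
        self.sink = []                    # (reward, chunk_id) pairs, best first
        self.window_size = window
        self.sink_size = sink

    def append(self, chunk_id, reward, is_anchor):
        self.window.append((chunk_id, reward, is_anchor))
        if len(self.window) > self.window_size:
            old_id, old_reward, old_anchor = self.window.popleft()
            if old_anchor:                # transient context is simply dropped
                self._offer_to_sink(old_id, old_reward)

    def _offer_to_sink(self, chunk_id, reward):
        self.sink.append((reward, chunk_id))
        self.sink.sort(reverse=True)      # highest reward first
        del self.sink[self.sink_size:]    # keep only the top entries

cache = SinkingKVCache()
for i in range(15):
    cache.append(chunk_id=i, reward=i % 5, is_anchor=True)
```

After 15 chunks the window holds the 9 most recent chunks, and the sink holds the three evicted chunks with the highest rewards rather than the three oldest ones.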

Results

The paper reports experiments on LongLive/Wan2.1-T2V-1.3B at 832×480, 16 FPS, seed 42, evaluated against CausVid, Self-Forcing, and LongLive. Qualitative comparisons (Figure 3) show that Stream-T1 improves both per-frame fidelity and cross-chunk temporal consistency relative to the streaming baselines; the abstract describes the gains as substantial across both axes, though specific aggregate metric numbers were not present in the provided sections.

Limitations and open questions

- The provided sections describe the framework and qualitative behavior but do not enumerate quantitative VBench-style scores or compute overhead versus baseline LongLive; beam search over chunks multiplies inference cost by the beam width, and the actual wall-clock trade-off is not pinned down here.

- The Long-Short Combined Reward depends on the calibration of heterogeneous reward models (HPSv3, VisionReward, VideoAlign, VideoLLaMA3); reward hacking and inter-model disagreement are not analyzed.

- Noise propagation assumes the optimal noise from chunk n{-}1 is informative for chunk n. At hard scene cuts or prompt switches this prior may actively hurt; the memory-sinking module’s semantic boundary detector is the implicit safeguard, but its robustness is not characterized.

- The method is tied to few-step streaming diffusion; whether the noise-propagation gain survives at higher denoising step counts (where initial noise matters less) is open.

Why this matters

Streaming video diffusion already pays a structural cost, chunked autoregression, that diffusion-based TTS treated as an obstacle. Stream-T1 inverts this: chunked, few-step generation is exactly the regime where per-chunk beam search with reward feedback is affordable and where initial-noise choice carries enough signal to be worth searching over. This is a more honest fit between TTS and the underlying generative process than retrofitting candidate exploration onto monolithic video diffusion.

Source: https://arxiv.org/abs/2605.04461

RLDX-1 Technical Report

RLDX-1 is a Vision-Language-Action (VLA) model targeting dexterous manipulation, built around a Multi-Stream Action Transformer (MSAT) that fuses heterogeneous robot modalities (vision, language, proprioception, torque, tactile) via modality-specific streams joined by cross-modal self-attention. The work argues that current frontier VLAs (e.g. \pi_{0.5}, GR00T N1.6) inherit strong scene understanding from pre-trained VLMs but lack three concrete capabilities required for real-world dexterous tasks: motion awareness across frames, memory-aware decision making over long horizons, and physical sensing (force/tactile). RLDX-1 is the system-level answer combining architecture, data synthesis, multi-stage training, and inference-graph optimization.

Architecture

The backbone is a temporally aware VLM derived from Qwen3-VL 8B. To address its lack of embodied grounding, the authors fine-tune it on a robot-specific VQA corpus with three slices: (i) end-effector–object spatial relations, (ii) intermediate subtask labels, and (iii) low-level action descriptors. Following GR00T-style practice, action-relevant features are extracted from an intermediate transformer layer rather than the final layer, since later layers specialize for language generation. A small set of learned cognition tokens summarize the VLM state into a compact representation passed to the action model.

The action model itself is the MSAT (Figure 3): each modality (vision/language cognition, proprioception, motion features, memory tokens, physics-stream torque/tactile) gets its own token stream with modality-specific projections, then all streams attend jointly through shared self-attention layers, with action chunks decoded via flow matching. A Motion Module supplies multi-frame temporal context for motion awareness, a Memory Module retains compressed past observations for long-horizon reasoning, and a Physics Stream ingests joint torque and fingertip tactile signals.

Training data and procedure

Pre-training uses ~1.5M episodes spanning single-arm grippers, dual-arm grippers, and humanoid dexterous hands: Open-X-Embodiment (870K), DROID (92K), Galaxea Open-World (114K), AgiBot World G/H (239K/36K), Fourier ActionNet (30K), Humanoid Everyday (9K), plus 150K synthetic GR-1 humanoid episodes generated by an in-house pipeline that targets rare manipulation scenarios. Visual preprocessing exploits Qwen3-VL’s native-resolution encoder and caps frames at 64\times 64 vision tokens while preserving aspect ratio.

Training proceeds in three stages: (1) flow-matching pre-training on the multi-embodiment mix to learn embodiment-agnostic action priors; (2) embodiment-specific mid-training that introduces the Memory and Physics modules and develops expert skills on ALLEX humanoid and tactile-augmented Franka Research 3 (FR3); (3) task-specific post-training for SOTA on individual benchmarks. The flow-matching objective predicts a velocity field v_\theta(a_t, t \mid o) along an interpolation a_t = (1-t)\epsilon + t\, a between Gaussian noise and the target action chunk.
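The flow-matching objective follows directly from the interpolation: differentiating a_t with respect to t gives the regression target a - \epsilon. The NumPy sketch below shows the loss and a plain Euler sampler; `velocity_fn` stands in for v_\theta, and the oracle field, tensor sizes, and step count are assumptions used only to sanity-check the code.

```python
import numpy as np

def flow_matching_loss(velocity_fn, actions, obs, rng):
    """Mean squared error between predicted and target velocity for a batch of action chunks."""
    eps = rng.standard_normal(actions.shape)            # Gaussian noise endpoint
    t = rng.uniform(size=(actions.shape[0], 1, 1))      # one time per sample, in [0, 1)
    a_t = (1.0 - t) * eps + t * actions
    target = actions - eps                              # d a_t / dt along the straight path
    return float(np.mean((velocity_fn(a_t, t, obs) - target) ** 2))

def sample_actions(velocity_fn, obs, shape, rng, n_steps=10):
    """Euler integration of the velocity field from noise (t=0) to actions (t=1)."""
    a = rng.standard_normal(shape)
    for i in range(n_steps):
        t = np.full((shape[0], 1, 1), i / n_steps)
        a = a + velocity_fn(a, t, obs) / n_steps
    return a

# Oracle field for a known target, used only to check the two functions.
def oracle_velocity(a_t, t, obs):
    return (obs - a_t) / (1.0 - t)

rng = np.random.default_rng(0)
actions = rng.normal(size=(4, 16, 7))   # (batch, chunk length, action dim); sizes are arbitrary
loss = flow_matching_loss(oracle_velocity, actions, actions, rng)
sampled = sample_actions(oracle_velocity, actions, actions.shape, rng)
```

With the oracle field the loss is zero up to rounding and the sampler lands on the target chunk, which confirms that the target velocity and the interpolation are consistent.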

Inference optimization

Because closed-loop control degrades with latency in dynamic scenes, the authors push inference through two layers of optimization. At the graph level, both PyTorch eager and torch.compile fragment the forward pass into multiple CUDA Graph subgraphs, leaving residual kernel-launch overhead that dominates the runtime of an 8B-VLM-based policy. They convert the model to a single static graph so the entire forward pass is captured as one CUDA Graph, eliminating launch overhead end-to-end. They additionally optimize at the kernel level (Section 5.2) to reduce intra-kernel inefficiency.

Results

The paper claims RLDX-1 “consistently outperforms” \pi_{0}-FAST, \pi_{0}, \pi_{0.5}, GR00T N1.5, and GR00T N1.6 across simulation benchmarks (Section 6.1) and a real-world OpenArm humanoid benchmark (Section 6.2). Mid-trained variants are evaluated on ALLEX and FR3 tasks specifically requiring motion awareness, long-term memory, and physical sensing (Sections 6.3–6.4). Ablations (Section 6.5) isolate contributions of MSAT vs. cross-attention fusion, the post-training stage, and the static-graph inference path. The provided excerpts list baselines and the dataset table but do not include the numeric headline scores; the abstract’s claim is qualitative (“consistently outperforms”), so concrete win margins must be read from the full tables in Section 6.

Limitations and open questions

Several issues are visible. First, the arXiv ID and metadata appear inconsistent with a 2025-era technical report citing Qwen3-VL, GR00T N1.6, and \pi_{0.5}; readers should treat this as a system report rather than a peer-reviewed result. Second, the synthetic data pipeline is humanoid-GR-1-specific (150K episodes); transfer to other dexterous platforms is not characterized. Third, the static-graph conversion presumes fixed shapes, which constrains variable-length memory or variable-frame Motion Module inputs at deployment. Fourth, no mention is made of failure modes when tactile/torque streams are noisy or partially missing, which is the realistic regime for FR3-style retrofits. Finally, the multi-stream joint-attention design is more parameter- and compute-heavy than cross-attention adapters used by GR00T N1.5/1.6; the latency-vs-capability trade-off relative to those baselines under matched inference budget is not reported in the excerpt.

Why this matters

RLDX-1 is a concrete instantiation of the thesis that closing the gap to general dexterous manipulation requires augmenting VLA backbones with explicit motion, memory, and physical-sensing pathways rather than scaling a vision-language stack alone. The static-graph CUDA-Graph inference recipe is independently useful for anyone deploying 8B-class VLM policies in closed-loop control.

Source: https://arxiv.org/abs/2605.03269

OpenSearch-VL: An Open Recipe for Frontier Multimodal Search Agents

Problem

Multimodal “deep search” agents — VLMs that interleave reasoning with image search, text search, OCR, cropping, and other visual tools to answer knowledge-intensive visual questions — have become a benchmark frontier (BrowseComp-VL, MMSearch, LiveVQA, InfoSeek). The strongest systems (GPT-5, Gemini-2.5-Pro) remain closed, and prior open attempts suffer from two structural data problems: (i) prompts that the base VLM can solve in a single forward pass, leading to one-step retrieval collapse, and (ii) shortcut leakage where the answer entity name appears in the question, defeating multi-hop tool use. OpenSearch-VL addresses these by releasing a complete pipeline: data construction, tool environment, SFT corpus (SearchVL-SFT-36k), RL corpus (SearchVL-RL-8k), and a multi-turn RL algorithm.

Data construction

The data pipeline is the most novel piece.

Wikipedia is treated as a directed graph \mathcal{G}=(\mathcal{V},\mathcal{E}) with articles as nodes and in-article hyperlinks as edges. A constrained random walk of length h\in\{2,3,4\} produces a path

P = (v_0 \xrightarrow{\rho_1} v_1 \xrightarrow{\rho_2} \cdots \xrightarrow{\rho_h} v_h),

skipping disambiguation pages, cycles, and hub nodes whose in-degree exceeds \tau_{\text{hub}}. Each node receives a functional role: v_0 is the visual anchor (replaced by a referring expression grounded in a representative image), v_1,\dots,v_{h-1} are bridge entities (names fuzzified), and v_h is the answer node. GPT-4o synthesizes a canonical question q_t that references v_h only via the queried attribute, then a fuzzy rewrite q_f removes entity-name shortcuts while fixing answer a. The deliberate decoupling of the visual anchor from the answer entity is what kills single-shot retrieval. Staged filtering then discards samples solvable without tools, and an enhancement pass degrades images (blur, low-res, perspective skew) so that tools like sharpening, super-resolution, and perspective correction become necessary — encouraging think-with-image behavior. Trajectories are synthesized in the actual tool environment and rejection-sampled with both an answer-correctness judge and a process-level judge, yielding 36,592 SFT trajectories. The 8k RL pool is drawn disjointly from the same VQA pool.
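The path-sampling step can be sketched on a toy link graph. The graph, the node names, and the helper names below are made up for illustration; only the constraints (no cycles, no disambiguation pages, no hubs above \tau_{\text{hub}}) and the role assignment come from the description above.

```python
import random

def in_degrees(graph):
    """Count incoming links for every node that appears as a link target."""
    deg = {}
    for targets in graph.values():
        for v in targets:
            deg[v] = deg.get(v, 0) + 1
    return deg

def constrained_walk(graph, start, hops, tau_hub, disambiguation, rng):
    """Random walk of `hops` edges; returns None if the walk gets stuck."""
    deg = in_degrees(graph)
    path = [start]
    for _ in range(hops):
        options = [v for v in graph.get(path[-1], [])
                   if v not in path                      # no cycles
                   and v not in disambiguation           # skip disambiguation pages
                   and deg.get(v, 0) <= tau_hub]         # skip hub nodes
        if not options:
            return None
        path.append(rng.choice(options))
    return path

def assign_roles(path):
    return {"visual_anchor": path[0], "bridges": path[1:-1], "answer": path[-1]}

toy_graph = {
    "A": ["B", "Hub", "Disambig"],
    "B": ["A", "C", "Hub"],
    "X": ["Hub"],
    "C": [],
}
path = constrained_walk(toy_graph, "A", hops=2, tau_hub=2,
                        disambiguation={"Disambig"}, rng=random.Random(0))
roles = assign_roles(path)
```

In this toy graph the only admissible 2-hop path from A is A, B, C, so A becomes the visual anchor, B the bridge entity, and C the answer node.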

Training

SFT factorizes each step’s action a_l = [z_l, c_l] (reasoning trace + tool call) autoregressively:

P_\theta(a_l \mid h_l) = P_\theta(z_l \mid h_l)\, P_\theta(c_l \mid h_l, z_l),

with retrieved tool observations o_l masked out of the loss (following Jin et al. 2025), so the model is supervised only on its own reasoning and actions.
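Observation masking amounts to averaging the negative log-likelihood over the model's own tokens only. A minimal sketch, with a hypothetical helper name and made-up log-probabilities:

```python
import numpy as np

def masked_nll(token_logprobs, is_observation):
    """Mean negative log-likelihood over tokens the model produced itself."""
    logp = np.asarray(token_logprobs, dtype=float)
    keep = ~np.asarray(is_observation, dtype=bool)   # True for reasoning and tool-call tokens
    return float(-(logp * keep).sum() / max(keep.sum(), 1))

# The third token is retrieved tool output, so its (very low) log-prob is ignored.
loss = masked_nll([-1.0, -2.0, -100.0, -3.0], [False, False, True, False])
```

Without the mask, the model would be trained to reproduce retrieved text it has no way of predicting.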

The RL stage uses GRPO over multi-turn rollouts in the live tool environment with a composite reward r = r_{\text{acc}} + r_{\text{query}} + r_{\text{fmt}} (final-answer correctness, process-level query quality, and format check). The key algorithmic contribution is fatal-aware token masking: when a trajectory hits a fatal error (malformed tool call, repeated identical query, environment failure) at step k, tokens after k are masked, and one-sided advantage clamping is applied so that early valid reasoning steps in an eventually-fatal trajectory are not penalized. This contrasts with hard-masking the entire trajectory.
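One plausible reading of fatal-aware masking is sketched below. The digest does not say whether the fatal step itself keeps a negative advantage, so the rule used here (tokens after step k get zero weight, earlier tokens get the advantage clamped at zero from below) is an assumption, as are the function names and the reward values in the usage.

```python
import numpy as np

def grpo_advantages(r_acc, r_query, r_fmt, eps=1e-6):
    """Group-normalized advantages from the composite reward r = r_acc + r_query + r_fmt."""
    r = np.asarray(r_acc, float) + np.asarray(r_query, float) + np.asarray(r_fmt, float)
    return (r - r.mean()) / (r.std() + eps)

def fatal_aware_token_advantages(advantage, token_steps, fatal_step=None):
    """Per-token advantage for one trajectory.

    token_steps: step index of each policy token.
    fatal_step: step k at which a fatal error occurred, or None for a clean trajectory.
    """
    token_steps = np.asarray(token_steps)
    if fatal_step is None:
        return np.full(token_steps.shape, advantage, dtype=float)
    clamped = max(advantage, 0.0)                     # one-sided clamp: never penalize
    return np.where(token_steps <= fatal_step, clamped, 0.0)   # mask tokens after k

adv = grpo_advantages([1, 0, 1, 0], [0.5, 0, 0, 0], [0.1, 0.1, 0.1, 0])
steps = [0, 0, 1, 1, 2, 2]
penalized_fatal = fatal_aware_token_advantages(-1.5, steps, fatal_step=1)
rewarded_fatal = fatal_aware_token_advantages(0.7, steps, fatal_step=1)
```

Hard-masking the whole trajectory would correspond to returning zeros everywhere, which also discards the positive signal from valid early steps.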

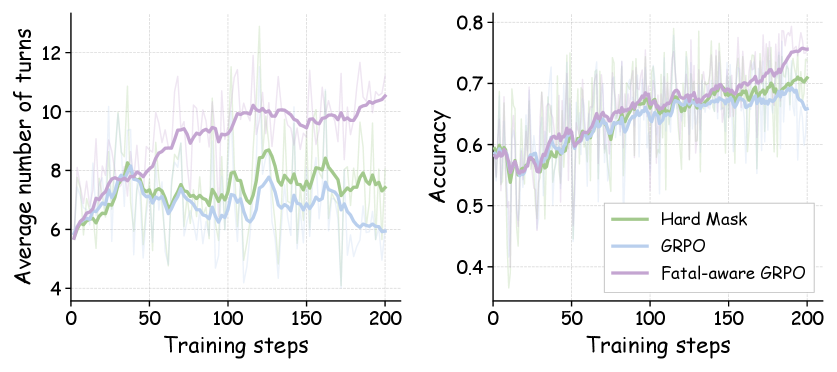

The dynamics plot shows that vanilla GRPO collapses to short rollouts (turn count drops as the policy learns to terminate early to avoid fatal penalties), while fatal-aware GRPO maintains higher turn counts and reaches strictly higher batch accuracy.

Results

On seven benchmarks (SimpleVQA, VDR, MMSearch, LiveVQA, BrowseComp-VL, FVQA, InfoSeek), reported as Pass@1 with a GPT-4o judge:

- Direct-reasoning baselines: Qwen3-VL-32B averages 31.6, GPT-4o 29.5, GPT-5 45.1, Gemini-2.5-Pro 46.0.

- RAG (single-pass retrieval): GPT-5 reaches 53.6, Qwen3-VL-8B 40.7.

- Open agentic baselines: MMSearch-R1-7B averages 42.4; Visual-ARFT-7B 28.8.

After OpenSearch-VL training, the Qwen3-VL-8B base rises from a direct-reasoning average of 22.3 to a substantially higher average that the paper describes as frontier-competitive; the 8B model under this recipe is positioned against GPT-5 and Gemini-2.5-Pro in the agentic block. Particularly notable is BrowseComp-VL, the hardest multi-hop visual web search benchmark, where GPT-4o gets 5.5 and even GPT-5 RAG gets 54.9: here the agentic recipe closes most of the gap from a 24.1 base. On VDR, where direct reasoning peaks at 9.8 (GPT-5) and most open agents stay below 8, the recipe pushes substantially higher via the staged-filtered, tool-demanding training distribution.

Limitations and open questions

- The judge is GPT-4o, which is itself a non-trivial source of variance and a soft ceiling on long-tail entity correctness.

- The 200-step RL run on 64 H20s is short; whether scaling RL further continues to lift BrowseComp-VL or saturates is unresolved.

- Wikipedia-derived paths bias toward English-encyclopedic entities; performance on non-Wikipedia long-tail visual entities (product photos, scientific figures) is not stress-tested.

- Fatal-aware masking depends on a hand-defined fatal-error taxonomy; learned fatal detection is left open.

- The process-level reward r_{\text{query}} relies on a query-quality judge whose calibration is not deeply ablated.

Why this matters

This is the first end-to-end open recipe — data synthesis pipeline, 36k+8k datasets, tool environment, SFT and a working multi-turn RL algorithm — that brings a 7B-30B open VLM into the agentic regime where it competes with GPT-5 and Gemini-2.5-Pro on multimodal deep search. The Wikipedia-graph-with-functional-roles construction and fatal-aware GRPO are directly reusable components for anyone training tool-using VLMs.

Source: https://arxiv.org/abs/2605.05185

PhysForge: Generating Physics-Grounded 3D Assets for Interactive Virtual World

Problem

Most image-to-3D generators produce static meshes optimized for visual fidelity but lacking the structural decomposition, material assignments, and joint definitions required by physics simulators or embodied-AI environments. Even part-aware methods such as OmniPart stop at geometry, leaving downstream pipelines to hand-author kinematics. PhysForge targets the joint generation of (i) part-segmented geometry, (ii) per-part physical properties (material, mass, function, affordance), and (iii) articulation parameters (joint type, axis origin, direction, limits) from a single image, with the explicit goal of producing simulation-ready URDF-like assets.

Dataset: PhysDB

The authors construct PhysDB by filtering Objaverse to 150k objects with non-trivial part structure across seven categories (household, industrial, weapons, personal, vehicles, tech/electronics, cultural). Annotations are organized in four tiers:

- Holistic: real-world scale, category, scene context.

- Static/semantic: per-part semantic label, material, mass.

- Functional: intrinsic function (e.g., “to contain”) and discrete state machine (e.g., Button: {pressed, released}), following OAKINK2.

- Interactive: atomic affordances (pushable, rotatable, …) and, for movable parts, parent linkage plus joint type ∈ {revolute, continuous, prismatic, fixed}.

A VLM produces initial labels from rendered whole-object and per-part views; humans correct them. PhysDB does not provide numerical joint axes at scale (deemed unreliable for arbitrary categories), so PartNet-Mobility and Infinite-Mobility are mixed in as articulation supervision.
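The four annotation tiers map naturally onto a nested record. The dataclasses below are a hypothetical schema written for illustration; the field names and the example values are not taken from PhysDB's release format.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Tuple

JOINT_TYPES = ("revolute", "continuous", "prismatic", "fixed")

@dataclass
class PartAnnotation:
    label: str                              # static/semantic tier
    material: str
    mass_kg: float
    function: str = ""                      # functional tier, e.g. "to contain"
    states: Tuple[str, ...] = ()            # discrete state machine
    affordances: Tuple[str, ...] = ()       # interactive tier
    parent: Optional[int] = None            # index of the parent part, if movable
    joint_type: str = "fixed"

    def __post_init__(self):
        if self.joint_type not in JOINT_TYPES:
            raise ValueError(f"unknown joint type: {self.joint_type}")

@dataclass
class ObjectAnnotation:
    category: str                           # holistic tier
    scale_m: float
    scene_context: str
    parts: List[PartAnnotation] = field(default_factory=list)

button = PartAnnotation(label="button", material="plastic", mass_kg=0.01,
                        function="to trigger", states=("pressed", "released"),
                        affordances=("pushable",), parent=0, joint_type="prismatic")
```

Note that the record carries a joint type but no numerical axis or pivot, mirroring the gap that the PartNet-Mobility and Infinite-Mobility supervision is meant to fill.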

Method

PhysForge is a two-stage decoupled pipeline.

Stage 1 — VLM Planner. A VLM ingests the input image and emits a “Hierarchical Physical Blueprint”: a tree of parts with bounding boxes, materials, masses, functions, affordances, parent indices, and joint types. This is a structured planning step rather than a free-form caption — the bounding-box layout doubles as spatial conditioning for Stage 2, and the discrete physical fields condition material/kinematic heads.

Stage 2 — Physics-Grounded Diffusion with KineVoxel Injection. A diffusion model generates a voxel/latent representation of geometry and texture conditioned on the blueprint. The novelty is the KineVoxel Injection (KVI) mechanism, which fuses kinematic conditioning (joint-type embedding plus a kinetics encoder over part voxels) into the denoiser so that geometry generation and articulation-parameter regression share features. Two ablated variants — “w/o joint type emb” and “w/o kinetics enc” — isolate the contribution of each KVI branch.

The model jointly outputs per-part geometry and continuous joint parameters (axis direction, pivot/origin, limits), making the asset directly exportable to a simulator.
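To make "directly exportable" concrete, the sketch below serializes a list of parts into a URDF-like XML string with Python's standard library. The input dictionary layout is hypothetical, the effort and velocity limits are placeholders, and inertia tensors, visual meshes, and collision meshes are omitted, so the output is a skeleton rather than a complete simulator asset.

```python
import xml.etree.ElementTree as ET

def to_urdf(name, parts):
    """Build a URDF-like skeleton: one link per part, one joint per child part."""
    robot = ET.Element("robot", name=name)
    for p in parts:
        link = ET.SubElement(robot, "link", name=p["name"])
        inertial = ET.SubElement(link, "inertial")
        ET.SubElement(inertial, "mass", value=str(p["mass"]))
    for p in parts:
        if p.get("parent") is None:          # the root part has no joint
            continue
        joint = ET.SubElement(robot, "joint",
                              name=f'{p["parent"]}_to_{p["name"]}', type=p["joint_type"])
        ET.SubElement(joint, "parent", link=p["parent"])
        ET.SubElement(joint, "child", link=p["name"])
        ET.SubElement(joint, "origin", xyz=" ".join(map(str, p["origin"])), rpy="0 0 0")
        if p["joint_type"] != "fixed":
            ET.SubElement(joint, "axis", xyz=" ".join(map(str, p["axis"])))
        if p["joint_type"] in ("revolute", "prismatic"):
            lower, upper = p["limits"]
            # effort and velocity are placeholder values, not model outputs
            ET.SubElement(joint, "limit", lower=str(lower), upper=str(upper),
                          effort="1.0", velocity="1.0")
    return ET.tostring(robot, encoding="unicode")

urdf = to_urdf("kettle", [
    {"name": "body", "mass": 0.8, "parent": None},
    {"name": "lid", "mass": 0.1, "parent": "body", "joint_type": "revolute",
     "origin": (0, 0, 0.1), "axis": (0, 0, 1), "limits": (0.0, 1.57)},
])
```

The joint type comes from the Stage 1 blueprint, while the origin, axis, and limits are the continuous quantities Stage 2 has to regress.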

Results

Part structure planning (PartObjaverse-Tiny). Using BBox IoU, Voxel Recall, and Voxel IoU, the full PhysForge sets a new SoTA. The informative ablation is row-wise: “PhysForge-bbox” (trained only on 500k part bounding boxes, no physics labels) underperforms “PhysForge w/o mask” (with physics conditioning, no 2D mask). The physics-conditioned model without any mask input still beats OmniPart’s first stage given SAM-derived masks. This indicates that semantic/physical labels act as a strong prior on plausible part decompositions — the model learns that, e.g., a kettle has a lid, spout, body, and handle even when nothing in the image segmentation suggests it.

Articulated object generation (Table 4, 340 PartNet-/Infinite-Mobility objects). Against Articulate Anything, Singapo, and URDFormer:

- Chamfer Distance: PhysForge 10.21 vs. Singapo 21.10, Articulate Anything 23.31, URDFormer 25.42 (≈2× lower).

- CLIP-Sim: 0.93 vs. 0.85–0.87.

- Joint-Axis-Err@5: 0.101 vs. Singapo 0.241, Articulate Anything 0.608.

- Joint-Pivot-Err@5: 0.071 vs. Singapo 0.153.

- Joint-Axis-Err-all / Pivot-all: 0.164 / 0.096 (Articulate Anything 0.694 / 0.197).
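As a quick sanity check on the "≈2×" reading, the snippet below divides each baseline's score by the PhysForge score, using only the numbers listed above. The helper name `ratio_to_ours` is hypothetical.

```python
# Values copied from the results list above (lower is better for both metrics).
chamfer = {
    "PhysForge": 10.21,
    "Singapo": 21.10,
    "Articulate Anything": 23.31,
    "URDFormer": 25.42,
}
axis_err_at_5 = {"PhysForge": 0.101, "Singapo": 0.241, "Articulate Anything": 0.608}


def ratio_to_ours(table, ours="PhysForge"):
    """Return baseline / ours for every baseline in the table."""
    return {name: value / table[ours] for name, value in table.items() if name != ours}


cd_ratios = ratio_to_ours(chamfer)
axis_ratios = ratio_to_ours(axis_err_at_5)

for name, r in cd_ratios.items():
    print(f"Chamfer Distance, {name}: {r:.2f}x the PhysForge value")
for name, r in axis_ratios.items():
    print(f"Joint-Axis-Err@5, {name}: {r:.2f}x the PhysForge value")
```

Every Chamfer Distance ratio lands between roughly 2.1 and 2.5, and the closest baseline on Joint-Axis-Err@5 (Singapo) is about 2.4 times the PhysForge value.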

Ablations show both KVI components matter: removing joint-type embeddings raises Joint-Axis-Err@5 from 0.101 to 0.157, and removing the kinetics encoder raises it to 0.158. Removing the kinetics encoder is reported to hurt axis prediction more than pivot prediction (Joint-Axis-Err-all rises from 0.164 to 0.204), consistent with the encoder providing geometric context for axis direction inference.

Limitations

- Articulation supervision relies on PartNet-Mobility/Infinite-Mobility, which are dominated by furniture, appliances, and tools; generalization to weapons, vehicles with complex linkages, or soft/multi-DoF mechanisms is unverified.

- PhysDB does not annotate numerical joint axes — the VLM planner only emits joint types, so axis/pivot accuracy depends entirely on what the diffusion stage can infer from the smaller curated articulation corpus.

- Reported metrics are geometric and kinematic; no closed-loop simulation evaluation (e.g., contact stability, manipulation success rates) is shown.

- Mass and material values are categorical/coarse; no calibration against physical measurements.

- The “w/o mask” ablation is strong, but the headline numbers benefit from blueprint-derived bounding boxes that themselves depend on VLM quality — error propagation from Stage 1 to Stage 2 is not quantified.

Why this matters

Decoupling physical planning (a discrete, semantic problem well-suited to VLMs) from physical realization (a continuous geometric/kinematic problem suited to diffusion) is a clean factorization for simulation-ready asset synthesis, and the ~2× CD improvement plus halving of joint-axis error over prior articulation pipelines suggests the factorization pays off. If PhysDB is released, the four-tier annotation schema could become a reference format for embodied-AI asset datasets.

Source: https://arxiv.org/abs/2605.05163

Rethinking Reasoning-Intensive Retrieval: Evaluating and Advancing Retrievers in Agentic Search Systems

Reasoning-intensive retrieval differs from topical retrieval in that the target is evidence which supports a downstream inference chain, not surface lexical or semantic similarity. The paper argues that current practice — exemplified by BRIGHT — undersells this distinction in two ways. First, benchmarks anchor each query to a narrow gold set, typically the single passage cited by the original answer, ignoring complementary evidence that a reasoner would actually need. Second, retrievers are evaluated in a single-shot, query-in/passages-out regime, while production agentic systems issue multiple reformulated queries and must accumulate a non-redundant evidence portfolio across turns. The authors address both gaps with a new benchmark (BRIGHT-Pro), a new synthetic training corpus (RTriever-Synth), and a fine-tuned encoder (RTriever-4B) built on Qwen3-Embedding-4B.

BRIGHT-Pro: aspect-decomposed gold and agentic protocol

BRIGHT-Pro re-annotates BRIGHT queries by having domain experts decompose each question into multiple reasoning aspects and label gold passages per aspect. A query q is associated with a set of aspect-labeled positives \{(a_i, P_i)\} where P_i is a non-empty passage set sufficient to discharge aspect a_i. Evaluation then admits two protocols:

- Static: standard nDCG/Recall against the union \bigcup_i P_i, but additionally an aspect-coverage metric measuring how many distinct a_i are hit in the top-k.

- Agentic: a fixed search agent issues up to T reformulated queries through the retriever; per-turn results are merged and judged on cumulative aspect coverage and answer-grounding sufficiency.
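The two protocols can be sketched with a simple coverage function. This is an illustrative reading, assuming an aspect counts as "hit" when any of its gold passages appears in the top-k and that agentic results are merged by de-duplicated union; the benchmark's exact definitions may differ. The function names and passage IDs are hypothetical.

```python
from typing import Dict, List, Set


def aspect_coverage(ranked: List[str], gold: Dict[str, Set[str]], k: int) -> float:
    """Fraction of aspects with at least one gold passage in the top-k."""
    top = set(ranked[:k])
    hit = sum(1 for passages in gold.values() if top & passages)
    return hit / len(gold)


def cumulative_coverage(turns: List[List[str]], gold: Dict[str, Set[str]], k: int) -> List[float]:
    """Coverage after each turn when per-turn top-k lists are merged without duplicates."""
    seen: List[str] = []
    curve = []
    for ranked in turns:
        for pid in ranked[:k]:
            if pid not in seen:
                seen.append(pid)
        curve.append(aspect_coverage(seen, gold, len(seen)))
    return curve


# Toy query with three aspects; passage IDs are made up.
gold = {"a1": {"p1", "p2"}, "a2": {"p3"}, "a3": {"p4"}}

# Static: a ranking that stacks redundant evidence for a1 covers one aspect of three.
static_ranking = ["p1", "p2", "x", "p3"]
print(aspect_coverage(static_ranking, gold, k=3))

# Agentic: three turns, top-2 per turn; the second turn partly repeats the first.
turns = [["p1", "p2"], ["p2", "p3"], ["p4", "x"]]
print(cumulative_coverage(turns, gold, k=2))
```

The static example shows how a ranking can place two gold passages in the top-3 yet cover only one aspect, which is the redundancy failure mode the aspect-coverage metric is meant to expose.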

The split lets the authors separate two failure modes that single-gold benchmarks conflate: (i) ranking the canonical gold high, and (ii) producing diverse evidence across aspects. Several strong reasoning retrievers do well on (i) but degrade on (ii), and the gap widens under the agentic protocol where redundant top-k behavior wastes turns.

RTriever-Synth: aspect-decomposed synthetic training

The training corpus is built so that supervision rewards portfolio construction rather than single-passage matching. Given a seed query q, an LLM produces an aspect decomposition \{a_1,\dots,a_m\}, and for each a_i generates:

- a complementary positive p_i^+ that contributes evidence for a_i but not for other aspects,

- a positive-conditioned hard negative p_i^- that is topically similar to p_i^+ and looks plausible for q but does not advance any a_i.

Training uses a contrastive objective with multi-positive InfoNCE,

\mathcal{L}(q) = -\frac{1}{m}\sum_{i=1}^{m}\log\frac{\exp(s(q,p_i^+)/\tau)}{\sum_{j=1}^{m}\exp(s(q,p_j^+)/\tau) + \sum_{k}\exp(s(q,p_k^-)/\tau)},

where s is cosine similarity in the encoder space, \tau is the softmax temperature, and k indexes the hard negatives. The denominator pools all m aspect positives together with the hard negatives, so the positives are treated as competitors of one another as well as of the negatives, in service of a diverse top-k. The conditioning of negatives on the matched positive is the key design choice: it pushes the encoder to discriminate aspect-relevant from aspect-adjacent text rather than relying on broad topical features. RTriever-4B is then a LoRA fine-tune of Qwen3-Embedding-4B on this corpus.
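The loss above can be written out directly for a single query. The sketch below is a plain-Python transcription of the formula, not the authors' training code; the temperature value 0.05 and the toy 2-D embeddings are assumptions.

```python
import math
from typing import List, Sequence


def cosine(u: Sequence[float], v: Sequence[float]) -> float:
    """Cosine similarity s(u, v)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def multi_positive_info_nce(
    q: Sequence[float],
    positives: List[Sequence[float]],
    negatives: List[Sequence[float]],
    tau: float = 0.05,  # assumed temperature, not taken from the paper
) -> float:
    """Mean over positives of -log softmax, with all positives and negatives in the denominator."""
    pos_logits = [cosine(q, p) / tau for p in positives]
    neg_logits = [cosine(q, n) / tau for n in negatives]
    all_logits = pos_logits + neg_logits
    # Log-sum-exp with max subtraction for numerical stability.
    peak = max(all_logits)
    log_denom = peak + math.log(sum(math.exp(x - peak) for x in all_logits))
    return -sum(logit - log_denom for logit in pos_logits) / len(pos_logits)


# Toy embeddings: the query points along the x-axis.
query = [1.0, 0.0]
aligned = [[1.0, 0.1], [0.9, 0.2]]
orthogonal = [[0.0, 1.0], [0.1, 1.0]]

good = multi_positive_info_nce(query, aligned, orthogonal)
bad = multi_positive_info_nce(query, orthogonal, aligned)
print(good, bad)
```

One consequence of pooling the positives in the denominator is that with m positives the loss cannot fall below log m, because the positives share the softmax mass. That floor is a property of this form of the objective, not a number reported by the paper.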

Results

Across BRIGHT-Pro, BRIGHT, and several reasoning-heavy QA suites, three patterns emerge:

- Aspect-aware static evaluation reorders the leaderboard. Models that look strong under standard nDCG@10 lose ground when scored on aspect coverage; the abstract reports that aspect-aware and agentic evaluation “expose behaviors hidden by standard metrics,” with multiple reasoning-tuned retrievers regressing relative to general-purpose baselines on coverage even while leading on canonical-gold nDCG.

- RTriever-4B substantially improves over its Qwen3-Embedding-4B base on BRIGHT-Pro under both static and agentic protocols, and is competitive with or surpasses larger reasoning-intensive retrievers despite the LoRA-only adaptation.

- Under the agentic protocol, gains compound across turns: complementary-positive training reduces redundancy in successive retrievals, so cumulative aspect coverage saturates faster than for retrievers trained on single-passage relevance.

Limitations and open questions

The benchmark inherits BRIGHT’s domain coverage, and its expert annotations are finite per query; aspect decompositions produced by experts may themselves be incomplete and bias the coverage metric. The synthetic corpus depends on an LLM both to decompose aspects and to generate hard negatives, so distillation artifacts (e.g., stylistic regularities in p_i^-) may inflate held-in gains. The agentic protocol fixes one search agent; results may not transfer across agents that rewrite queries differently or that interleave tool calls. Finally, the paper does not directly compare against late-interaction or learned-sparse models, or against diversity-aware reranking (e.g., MMR), which are the natural baselines for portfolio retrieval.

Why this matters

If retrievers are to serve agentic loops rather than one-shot QA, both training and evaluation need to be portfolio-aware. This work operationalizes that shift with a concrete benchmark, a synthetic-data recipe centered on aspect-conditioned hard negatives, and evidence that single-gold metrics systematically mislead about agentic utility.

Source: https://arxiv.org/abs/2605.04018

Hacker News Signals

Natural Language Autoencoders: Turning Claude’s Thoughts into Text

Anthropic’s post describes a method for mapping Claude’s internal chain-of-thought representations back into natural language, functioning as an autoencoder over latent reasoning states. The core idea: train an encoder that compresses a model’s intermediate activations (or scratchpad token sequences) into a compact natural-language summary, and a decoder that can reconstruct behavior from that summary. This is distinct from standard interpretability probes, which extract scalar features; here the bottleneck is itself natural language, making the latent space human-readable by construction.

The technical setup involves training a secondary model to produce a natural-language “thought summary” such that conditioning Claude on that summary recovers downstream outputs with fidelity close to conditioning on the original full chain-of-thought. Loss is a combination of behavioral fidelity (KL or cross-entropy between output distributions given original vs. reconstructed thoughts) and readability or compression objectives.

The motivation is mechanistic: if a natural-language summary can roundtrip through the model with low reconstruction error, it constitutes a faithful, human-interpretable account of what the model was “actually doing.” This has direct relevance to alignment and oversight — you can audit the summary rather than thousands of attention weights.

Key open questions include whether the summaries capture genuinely causally relevant information or merely correlate with outputs, how compression ratio affects faithfulness, and whether the summaries are robust to adversarial prompting that causes the internal reasoning to diverge from the surface scratchpad. The approach also depends heavily on the quality of the secondary encoder-decoder, introducing a second model whose interpretability is unresolved. Still, as a scalable alternative to activation patching or probing classifiers, it represents a practical step toward legible oversight of extended-context reasoning.

Source: https://www.anthropic.com/research/natural-language-autoencoders

Accelerating Gemma 4: Faster Inference with Multi-Token Prediction Drafters

Google’s post details how multi-token prediction (MTP) drafters are integrated into Gemma 4 to accelerate inference via speculative decoding. The standard speculative decoding setup uses a small draft model to propose k tokens, which the target model verifies in a single forward pass; accepted tokens reduce the effective number of target-model calls. The Gemma 4 variant uses a lightweight MTP head — an auxiliary output head trained jointly with the main model — rather than a separate draft model, avoiding the operational complexity of maintaining two distinct checkpoints.

The MTP head is trained to predict multiple future tokens simultaneously from the same hidden state. At inference, the main model’s final hidden state is fed into the MTP head to generate a draft continuation of length k (typically 4–8 tokens). The base model then verifies all k in one parallel forward pass. Acceptance rate and the resulting wall-clock speedup depend on how well the MTP head’s distribution matches the base model’s; Google reports meaningful throughput gains, particularly on near-deterministic tasks like code generation where acceptance rates are high.
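The draft-then-verify loop can be illustrated with toy stand-ins for the two components. This sketch uses greedy exact-match acceptance, which is a simplification: sampling-based speculative decoding uses a probabilistic accept/reject rule, and the post's actual implementation is not shown here. `draft_fn`, `target_next`, and the counting "model" are all hypothetical.

```python
from typing import Callable, List


def speculative_step(
    prefix: List[int],
    draft_fn: Callable[[List[int], int], List[int]],
    target_next: Callable[[List[int]], int],
    k: int,
) -> List[int]:
    """Return the tokens emitted by one draft-and-verify round (between 1 and k + 1)."""
    draft = draft_fn(prefix, k)  # stand-in for the MTP head: k tokens proposed at once
    emitted: List[int] = []
    ctx = list(prefix)
    # A real system scores all k positions in one batched forward pass;
    # this loop emulates that pass position by position.
    for tok in draft:
        expected = target_next(ctx)
        if tok != expected:
            emitted.append(expected)  # first mismatch: keep the target's token and stop
            return emitted
        emitted.append(tok)
        ctx.append(tok)
    emitted.append(target_next(ctx))  # every draft token accepted: one bonus token
    return emitted


def target_next(ctx: List[int]) -> int:
    """Toy target model: counts upward modulo 10."""
    return (ctx[-1] + 1) % 10


def good_draft(ctx: List[int], k: int) -> List[int]:
    """Toy drafter that always agrees with the target."""
    return [(ctx[-1] + i) % 10 for i in range(1, k + 1)]


def flaky_draft(ctx: List[int], k: int) -> List[int]:
    """Toy drafter whose last proposed token is wrong."""
    toks = good_draft(ctx, k)
    toks[-1] = (toks[-1] + 5) % 10
    return toks


print(speculative_step([0], good_draft, target_next, k=4))
print(speculative_step([0], flaky_draft, target_next, k=4))
```

Even the flaky drafter yields four tokens from a single verification round in this toy setup, which is the source of the speedup; the benefit shrinks as mismatches move earlier in the draft.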

Technically, the MTP head adds minimal parameter overhead — it reuses the model’s hidden representations and adds only a small projection per additional token position. Training uses a weighted auxiliary loss summed over future token positions, which has been shown in prior work (e.g., Meta’s MTP paper) to slightly improve main-task perplexity as a side effect of the richer supervision signal.

Practical implications: single-checkpoint deployment, no draft-model latency mismatch issues, and straightforward integration with existing KV-cache management. Limitations include that MTP heads provide less draft diversity than a separately trained small model, and speedup degrades sharply on high-temperature or creative generation where acceptance rates drop. The approach is most effective in constrained-output regimes.

Source: https://blog.google/innovation-and-ai/technology/developers-tools/multi-token-prediction-gemma-4/

Learning the Integral of a Diffusion Model

Sander Dieleman’s post develops the idea of learning flow maps — direct mappings from noise at time t_0 to clean data at time t_1 that integrate over the entire denoising trajectory, bypassing iterative stepping. Standard diffusion/flow-matching models learn the instantaneous velocity field v_\theta(x, t); inference then requires numerical ODE integration over many steps. The insight here is that the integral \Phi(x_{t_0}, t_0, t_1) = x_{t_0} + \int_{t_0}^{t_1} v_\theta(x_t, t)\, dt can itself be parameterized and learned directly as a neural network.

A flow map F_\theta(x_s, s, t) is trained to satisfy the consistency constraint: F_\theta(x_s, s, t) = F_\theta(F_\theta(x_s, s, r), r, t) for all s < r < t, analogous to the self-consistency condition in consistency models. This is related to but distinct from consistency distillation — here the map is learned over arbitrary time intervals, not just from arbitrary t to t=0.
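A toy case makes the constraint tangible. For the linear velocity field v(x, t) = -x, chosen here purely for illustration and not taken from the post, the flow map has the closed form x · exp(-(t - s)), and the composition property can be checked numerically alongside a step-by-step Euler integration.

```python
import math


def velocity(x: float, t: float) -> float:
    """Toy velocity field dx/dt = -x (an assumption for illustration)."""
    return -x


def euler_integrate(x: float, s: float, t: float, steps: int) -> float:
    """Iterative stepping: integrate the velocity field from time s to time t."""
    h = (t - s) / steps
    for i in range(steps):
        x = x + h * velocity(x, s + i * h)
    return x


def flow_map(x: float, s: float, t: float) -> float:
    """Closed-form integral of the toy field: jumps from s to t in one evaluation."""
    return x * math.exp(-(t - s))


def consistency_residual(F, x: float, s: float, r: float, t: float) -> float:
    """|F(x, s, t) - F(F(x, s, r), r, t)|, which is zero for an exact flow map."""
    return abs(F(x, s, t) - F(F(x, s, r), r, t))


x0 = 1.0
print(consistency_residual(flow_map, x0, 0.0, 0.4, 1.0))  # close to zero
print(flow_map(x0, 0.0, 1.0))                # one evaluation of the flow map
print(euler_integrate(x0, 0.0, 1.0, 1000))   # many small steps, nearly the same value
print(euler_integrate(x0, 0.0, 1.0, 1))      # a single Euler step is badly off
```

A learned F_\theta would replace `flow_map`, and the residual above is the quantity a self-consistency loss would drive toward zero over sampled (s, r, t) triples.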

Training uses a combination of regression against multi-step ODE solutions (distillation) and self-consistency losses. The key difficulty is that the target for a long-interval flow map must itself be computed by composing shorter-interval maps, creating a bootstrapping problem similar to that in TD learning. The post discusses how to stabilize this via stop-gradients and progressive interval expansion.

The practical payoff is one-step or few-step generation with quality closer to many-step ODE solvers than standard consistency models achieve, because the model learns globally coherent trajectory integrals rather than local velocity corrections. Open questions include scaling behavior, whether the consistency constraint can be enforced without expensive ODE rollouts during training, and connections to learned ODE solvers in the neural PDE literature.

Source: https://sander.ai/2026/05/06/flow-maps.html

ProgramBench: Can Language Models Rebuild Programs from Scratch?

ProgramBench is a benchmark probing whether LLMs can reconstruct complete, executable programs from natural-language descriptions of their behavior, framed as a measure of deep program understanding rather than snippet completion. The core task: given a specification (docstring, input-output examples, or both), generate a full program that passes a hidden test suite. Problems span algorithmic challenges, data structure manipulation, and systems-level logic at varying complexity.

The benchmark’s methodological contribution is the distinction between behavioral specification (what the program does) and structural hints (how it does it). Prior coding benchmarks like HumanEval or MBPP often include structural cues in the docstring; ProgramBench deliberately removes them, forcing models to synthesize implementation strategy from scratch. Evaluation is execution-based: pass@k against held-out test cases, with k \in \{1, 5, 10\}.
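For reference, pass@k is commonly computed with the unbiased estimator popularized by the HumanEval/Codex evaluation: draw n samples per problem, count the c that pass, and estimate 1 - C(n-c, k)/C(n, k). Whether ProgramBench uses exactly this estimator is an assumption; the summary only states that pass@k is reported.

```python
from math import comb


def pass_at_k(n: int, c: int, k: int) -> float:
    """Unbiased pass@k estimate from n samples of which c passed the hidden tests."""
    if not 0 <= c <= n:
        raise ValueError("c must satisfy 0 <= c <= n")
    if not 1 <= k <= n:
        raise ValueError("k must satisfy 1 <= k <= n")
    if n - c < k:
        return 1.0  # every size-k subset contains at least one passing sample
    return 1.0 - comb(n - c, k) / comb(n, k)


# Example: 10 samples for one problem, 3 of which pass.
for k in (1, 5, 10):
    print(k, pass_at_k(10, 3, k))
```

The per-problem estimates are then averaged over the benchmark. Note that pass@1 under this estimator reduces to the plain pass rate c/n.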

Results show a steep drop relative to HumanEval performance. Even strong frontier models achieve substantially lower pass@1 on harder ProgramBench instances where multi-function programs and non-trivial algorithms are required. The benchmark surfaces a specific failure mode: models frequently generate locally plausible but globally inconsistent programs, with correct subroutines that do not compose into a working whole.

The paper also reports that chain-of-thought prompting improves scores modestly but not uniformly, with gains concentrated on medium-difficulty problems. Hard problems remain largely unsolved, suggesting current models struggle with the planning component of long-horizon code synthesis.

Limitations: execution-based pass/fail does not distinguish partial correctness; the test suites themselves may have coverage gaps; and the benchmark skews toward competitive-programming-style problems, which may not represent industrial software tasks. The gap it reveals is real, but generalizing it to practical coding assistance requires caution.

Source: https://arxiv.org/abs/2605.03546

DeepSeek 4 Flash: Local Inference Engine for Metal

Antirez’s ds4 is a from-scratch C inference engine targeting Apple Silicon via the Metal GPU compute API, designed specifically for DeepSeek’s quantized models. The project avoids llama.cpp and MLX as dependencies, implementing the full forward pass — including DeepSeek’s mixture-of-experts routing, multi-head latent attention (MLA), and grouped-query attention — directly in C with Metal shaders.

The technically notable aspect is the MLA implementation. DeepSeek V2/V3/R1 use low-rank KV compression: keys and values are projected down to a latent dimension d_c \ll d_{kv}, where d_{kv} is the uncompressed key/value width, before caching. This reduces KV cache memory by roughly 10\times at the cost of a small up-projection at attention time. Getting this right in a custom kernel requires careful handling of the absorbed projection matrices. DeepSeek’s inference documentation notes that the RoPE position encoding interacts with the low-rank structure in a non-trivial way, so the key must be split into RoPE and non-RoPE components before compression.
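The memory argument comes down to counting cached elements per token per layer. The sketch below assumes that a conventional cache stores full keys and values for every KV head, while a latent cache stores one compressed vector of width d_c plus a separate RoPE key component. All dimensions are made-up illustrative values, not the configuration of any released DeepSeek model, and the function names are hypothetical.

```python
def kv_elems_standard(n_kv_heads: int, head_dim: int) -> int:
    """Cached elements per token per layer when full keys and values are stored."""
    return 2 * n_kv_heads * head_dim


def kv_elems_latent(d_c: int, d_rope: int) -> int:
    """Cached elements per token per layer with a shared latent plus a RoPE key part."""
    return d_c + d_rope


# Illustrative dimensions only (assumptions, not a real model config).
baseline = kv_elems_standard(n_kv_heads=32, head_dim=128)
latent = kv_elems_latent(d_c=512, d_rope=64)
ratio = baseline / latent

print(baseline, latent, round(ratio, 1))
```

With these particular numbers the reduction is about 14×; the real factor depends on the model's dimensions and on which baseline attention layout is used for comparison.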

For MoE routing, the engine implements top-k expert selection with the shared-expert architecture DeepSeek uses (a subset of experts always active, remainder selected per-token). Metal’s SIMD-group operations are used for the softmax over router logits and for the sparse accumulation across selected expert outputs.
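The routing logic can be sketched in a few lines, independent of Metal. This is a CPU-side illustration in plain Python, not code from the repository: experts are stand-in scalar functions, and renormalizing the gate weights over the selected experts is an assumption about the gating scheme.

```python
import math
from typing import Callable, List, Tuple

Expert = Callable[[float], float]


def softmax(logits: List[float]) -> List[float]:
    """Numerically stable softmax over router logits."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def route(router_logits: List[float], top_k: int) -> List[Tuple[int, float]]:
    """Pick the top_k experts and renormalize their gate weights to sum to 1."""
    probs = softmax(router_logits)
    chosen = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:top_k]
    mass = sum(probs[i] for i in chosen)
    return [(i, probs[i] / mass) for i in chosen]


def moe_output(
    x: float,
    shared_experts: List[Expert],
    routed_experts: List[Expert],
    router_logits: List[float],
    top_k: int,
) -> float:
    """Shared experts always run; routed experts contribute a sparse weighted sum."""
    out = sum(expert(x) for expert in shared_experts)
    for idx, weight in route(router_logits, top_k):
        out += weight * routed_experts[idx](x)
    return out


# Toy setup: one shared expert and four routed experts acting on a scalar.
shared = [lambda x: x]
routed = [lambda x: 10 * x, lambda x: 20 * x, lambda x: 30 * x, lambda x: 40 * x]
logits = [0.0, 2.0, 1.0, -1.0]

print(route(logits, top_k=2))
print(moe_output(1.0, shared, routed, logits, top_k=2))
```

In a GPU kernel the softmax reduction and the weighted accumulation are the two steps that map onto cross-lane operations, which is where the SIMD-group primitives mentioned above would come in.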

Quantization support targets Q4 and Q8 formats with Metal-side dequantization fused into the matrix multiply kernels, avoiding a separate dequant pass. The result is a minimal, auditable codebase — a few thousand lines — that achieves competitive token throughput on M-series chips for mid-size DeepSeek variants.
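The idea of fusing dequantization into the multiply can be shown on a single block. The sketch below assumes a symmetric 8-bit scheme with one floating-point scale per block; the repository's actual storage formats and block sizes are not described in this summary, so treat the layout and the function names as hypothetical.

```python
from typing import List, Tuple


def quantize_q8(block: List[float]) -> Tuple[float, List[int]]:
    """Symmetric 8-bit quantization: one scale per block, integers in [-127, 127]."""
    amax = max(abs(v) for v in block)
    scale = amax / 127 if amax > 0 else 1.0
    return scale, [round(v / scale) for v in block]


def fused_dot(scale: float, q: List[int], x: List[float]) -> float:
    """Dot product computed on the quantized weights, with the scale applied once.

    No dequantized copy of the weights is materialized, which is the point of fusing.
    """
    return scale * sum(qi * xi for qi, xi in zip(q, x))


weights = [0.5, -1.0, 0.25, 0.75]
activations = [1.0, 2.0, 3.0, 4.0]

scale, q = quantize_q8(weights)
exact = sum(w * a for w, a in zip(weights, activations))
approx = fused_dot(scale, q, activations)
print(exact, approx)
```

Applying the scale after the integer accumulation is what lets a kernel skip a separate dequantization pass over the weight matrix.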

Open limitations: there is no batching beyond batch size 1 and no speculative decoding, and Metal’s lack of persistent thread groups makes KV cache management less efficient than CUDA equivalents. The project is still useful as a reference implementation for understanding DeepSeek’s architecture at the kernel level.

Source: https://github.com/antirez/ds4

Noteworthy New Repositories

Tencent-Hunyuan/HY-World-2.0

HY-World 2.0 is a multi-modal world model targeting three coupled tasks: 3D scene reconstruction from images/video, generative synthesis of novel 3D environments, and forward simulation of scene dynamics. The architecture combines a video diffusion backbone with an explicit 3D representation layer, allowing the model to maintain geometric consistency across generated frames rather than treating each frame independently. The reconstruction pipeline infers scene geometry and appearance jointly, feeding into a latent space that supports both unconditional generation and conditional rollouts given an initial state and action sequence. The simulation component positions this as a potential environment model for embodied agents, where photorealistic and geometrically coherent rollouts reduce the sim-to-real gap. Multi-modal conditioning covers text, images, and depth priors. The release includes model weights, inference code, and evaluation scripts. Technical differentiation from predecessors like HY-World 1.x lies in improved temporal coherence and the unified handling of all three tasks within one model rather than separate specialized heads. Open questions include scalability to unbounded scene extents and the fidelity of dynamic object interactions under occlusion.

Source: https://github.com/Tencent-Hunyuan/HY-World-2.0

facebookresearch/neuroai

NeuroAI is a Python library from FAIR designed to bridge systems neuroscience and deep learning research across visual, auditory, and somatosensory modalities. The core abstraction is a standardized benchmark pipeline: load a pretrained neural network model, extract intermediate representations at specified layers, fit a linear readout to neural population recordings, and evaluate predictive accuracy using standard neuroscience metrics such as explained variance and noise-corrected correlation. It wraps datasets from NeuralBench, Brain-Score, and proprietary FAIR recordings under a uniform API, reducing the boilerplate that typically makes cross-modality comparisons difficult. The library supports RSA (representational similarity analysis) and CKA (centered kernel alignment) for geometry-level comparisons between model activations and neural data without requiring regression. Integration with PyTorch model zoos means any torchvision or HuggingFace model can be evaluated against neural benchmarks with minimal adapter code. Practically, this enables rapid iteration on architecture ablations targeted at neural predictivity rather than task accuracy alone, which is relevant for understanding which inductive biases in deep networks correspond to biological computation.
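For readers unfamiliar with CKA, the linear variant fits in a few lines. This is a from-scratch sketch of the standard formula, ||Y^T X||_F^2 / (||X^T X||_F · ||Y^T Y||_F) on column-centered matrices, and is not the library's API; all function names here are hypothetical.

```python
import math
from typing import List

Matrix = List[List[float]]  # rows are stimuli, columns are features (units or neurons)


def center_columns(m: Matrix) -> Matrix:
    """Subtract each column's mean."""
    n, d = len(m), len(m[0])
    means = [sum(row[j] for row in m) / n for j in range(d)]
    return [[row[j] - means[j] for j in range(d)] for row in m]


def cross_frobenius_sq(a: Matrix, b: Matrix) -> float:
    """Squared Frobenius norm of a^T b."""
    total = 0.0
    for i in range(len(a[0])):
        for j in range(len(b[0])):
            entry = sum(a[r][i] * b[r][j] for r in range(len(a)))
            total += entry * entry
    return total


def linear_cka(x: Matrix, y: Matrix) -> float:
    """Linear CKA between two representations of the same stimuli."""
    xc, yc = center_columns(x), center_columns(y)
    denom = math.sqrt(cross_frobenius_sq(xc, xc) * cross_frobenius_sq(yc, yc))
    return cross_frobenius_sq(xc, yc) / denom


# Toy "model activations" for four stimuli.
acts = [[1.0, 2.0], [3.0, 1.0], [0.0, 4.0], [2.0, 2.0]]
# The same geometry, rotated by 90 degrees and scaled by 3.
rotated = [[-3.0 * b, 3.0 * a] for a, b in acts]
# A different geometry over the same stimuli.
other = [[1.0], [0.0], [0.0], [1.0]]

print(linear_cka(acts, acts), linear_cka(acts, rotated), linear_cka(acts, other))
```

Because linear CKA is invariant to rotation and isotropic scaling, the rotated copy scores 1, which is why it suits geometry-level comparisons where no regression from model units to neurons is fitted.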

Source: https://github.com/facebookresearch/neuroai

kyegomez/OpenMythos

OpenMythos is a speculative reverse-engineering exercise that attempts to reconstruct the hypothesized internal architecture of Anthropic’s Claude models from publicly available research papers, blog posts, and technical reports — no proprietary code or weights are involved. The repository translates architectural hypotheses into runnable PyTorch implementations, covering areas such as Constitutional AI reward modeling, multi-step critique-revision loops, and inferred attention patterns consistent with Claude’s reported context lengths and behavioral properties. The value is primarily pedagogical: it forces concrete architectural commitments where the literature is ambiguous, making implicit assumptions explicit and testable. Each module is annotated with citations to the specific papers motivating the design choice. The high star count reflects community interest in interpretable reconstructions of frontier model internals rather than production utility. Significant caveats apply: the actual Claude architecture is undisclosed, so all structural decisions here are informed speculation and should not be treated as ground truth. The project is most useful as a reading companion to the Constitutional AI and RLHF literature, providing runnable skeletons around which the theory can be explored.

Source: https://github.com/kyegomez/OpenMythos

walkinglabs/hands-on-modern-rl

hands-on-modern-rl is an open curriculum structured as a progression from classical tabular and deep RL foundations through to contemporary LLM alignment methods and agentic systems. Early modules cover policy gradient derivations, Q-learning variants, and actor-critic architectures with self-contained Jupyter notebooks. The curriculum then transitions to proximal policy optimization (PPO) and its application in RLHF pipelines, with implementations that mirror the setups used in InstructGPT-style training. A dedicated section on Reinforcement Learning from Verifiable Rewards (RLVR) covers reward modeling when ground-truth signals are available (e.g., math verification, code execution), contrasting it with learned preference models. The agentic track addresses tool-use environments, multi-step reasoning under partial observability, and the specific credit assignment challenges that arise when reward is sparse across long action sequences. Code targets a reproducible stack: Python, PyTorch, and lightweight gym-compatible environments, avoiding heavy infrastructure dependencies. The gap this fills is the absence of a single resource that connects the classical RL mathematical foundations to the specific algorithmic variants now dominating LLM post-training, making it useful for researchers entering the LLM alignment space from an RL background or vice versa.